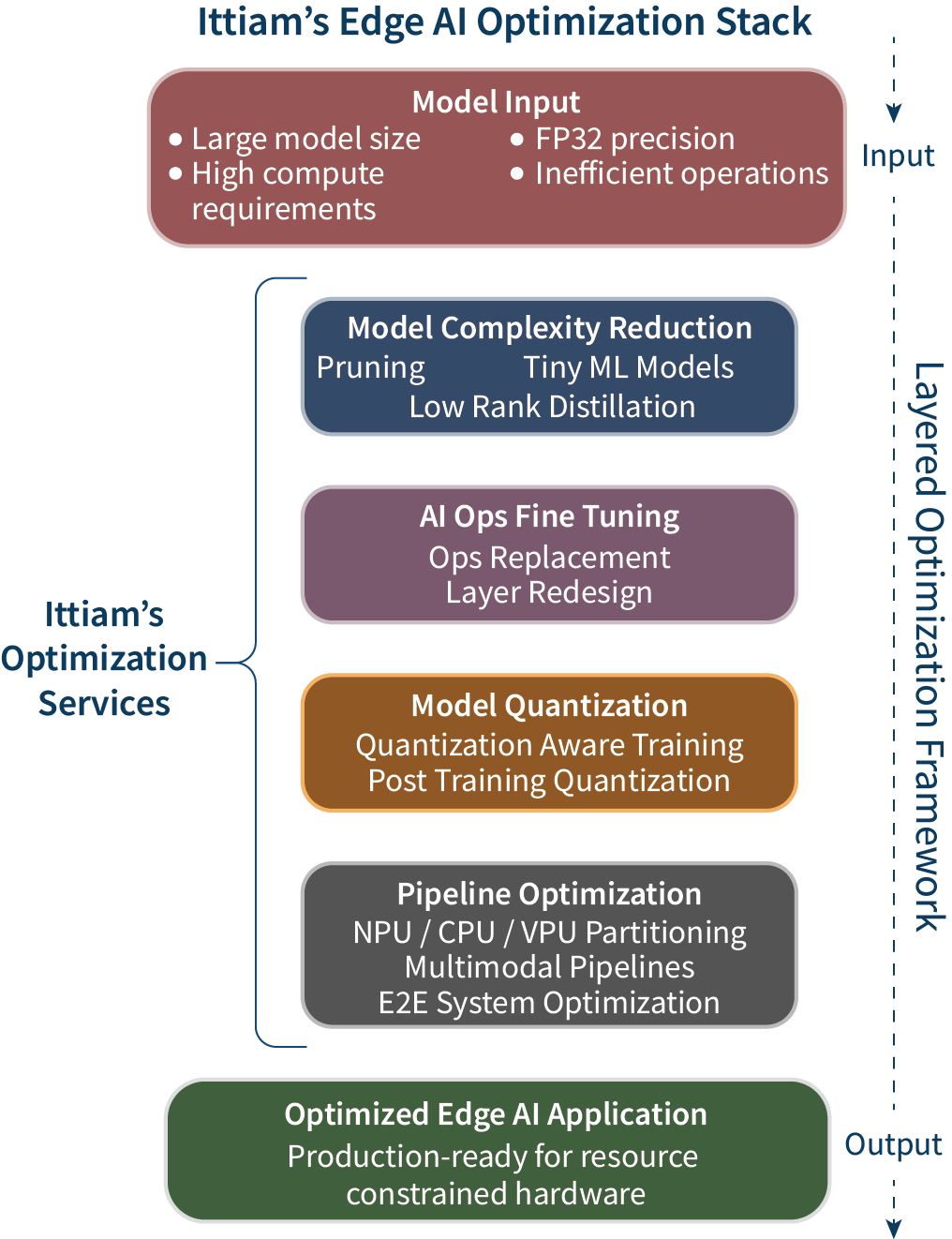

Ittiam’s Edge AI Optimization Stack

We optimize your complete AI pipeline: video and audio decoding, preprocessing, multimodal inference, post-processing, multimodal fusion, and intelligent workload distribution across CPU, GPU, NPU, and DSP. This end-to-end approach unlocks performance previously out of reach on constrained edge hardware, from mobile devices to broadcast equipment.

Broadcasting and streaming demand real-time multimodal intelligence. Whether you're building AI-powered content creation tools, live video enhancement for broadcasters, personalized on-device experiences for streaming viewers, or interactive virtual production systems, multimodal AI must coordinate vision, audio, and text processing with frame-accurate precision. Our expertise in multi-stream synchronization delivers the sub-second latency and consistent quality that live broadcasting, real-time content generation, and responsive viewer experiences require.

Our cross-platform engineering gives you flexibility. We work across Qualcomm, Intel, AMD, MediaTek, and Synaptics chipsets and deliver production-ready code optimized for your specific hardware, product requirements, and performance targets.

See our capabilities in action with our Multimodal Application for Neural based Avatar Video, a real-time on-device avatar generator. Running on edge processors such as the Snapdragon 8 Gen 3 and Synaptics SL1680, it generates photorealistic, lip-synced avatars in real time, entirely on-device. This showcase application proves what's possible when system-level optimization meets sophisticated AI.

Partner with us to bring your edge AI vision to reality.